AI-generated content has grown exponentially. By early 2026, industry reports estimate that more than 60 percent of online text, marketing materials, academic submissions, and business reports include AI assistance or full generation. This surge creates a critical need for verification. Organizations must distinguish human-written work from machine-produced text to maintain trust, quality, and compliance.

AI detection software addresses this challenge directly. It analyzes written content to determine the probability that an AI model such as GPT-5, Claude 3.5, or Gemini produced it. The technology protects academic integrity, safeguards brand reputation, supports fair hiring processes, and helps content teams meet search engine quality standards.

This guide explains what AI detection software is and how it works in 2026, compares the best tools with current accuracy data, outlines limitations, explores enterprise use cases, and provides a practical checklist for selection. It also details why many organizations now choose custom AI detection software development for superior performance.

What is AI Detection Software?

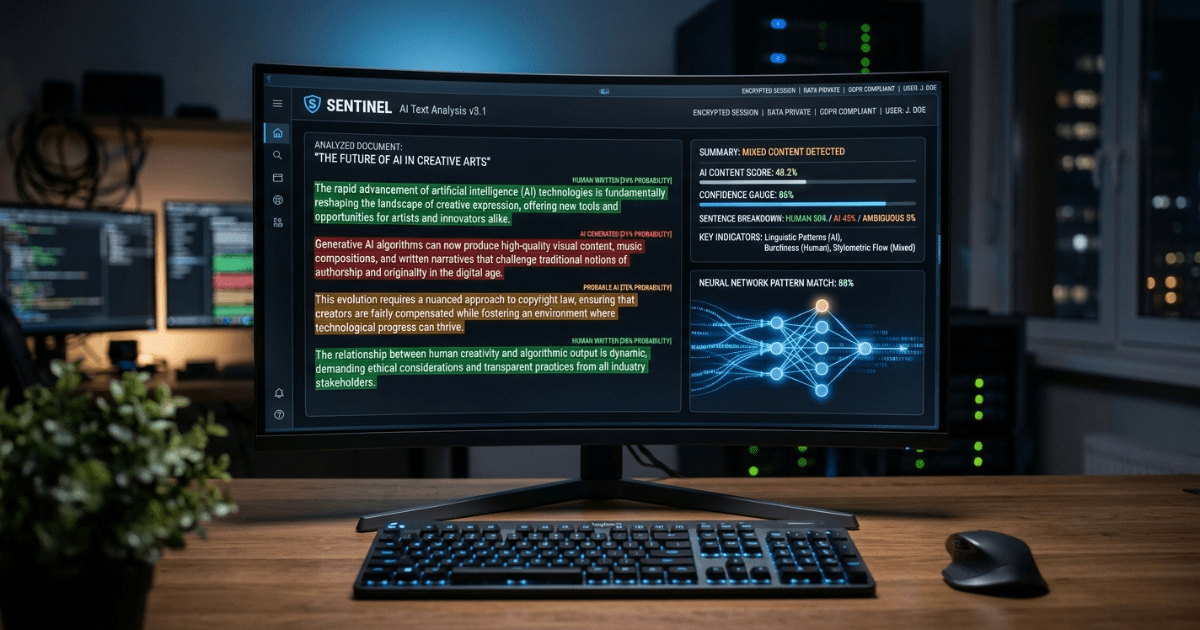

AI detection software is a specialized tool that uses machine learning models to evaluate text and calculate the likelihood it was generated by artificial intelligence rather than a human writer. In simple terms, it scans content and returns a probability score—often expressed as a percentage—indicating whether the text appears human-written, AI-generated, or mixed.

These tools go beyond basic plagiarism checkers. They examine statistical patterns unique to large language models. Modern versions in 2026 also handle mixed human-AI content, multilingual text, and even documents with images through optical character recognition. The core purpose remains the same: deliver objective, data-driven insights that help decision-makers act with confidence.

How AI Detection Software Works

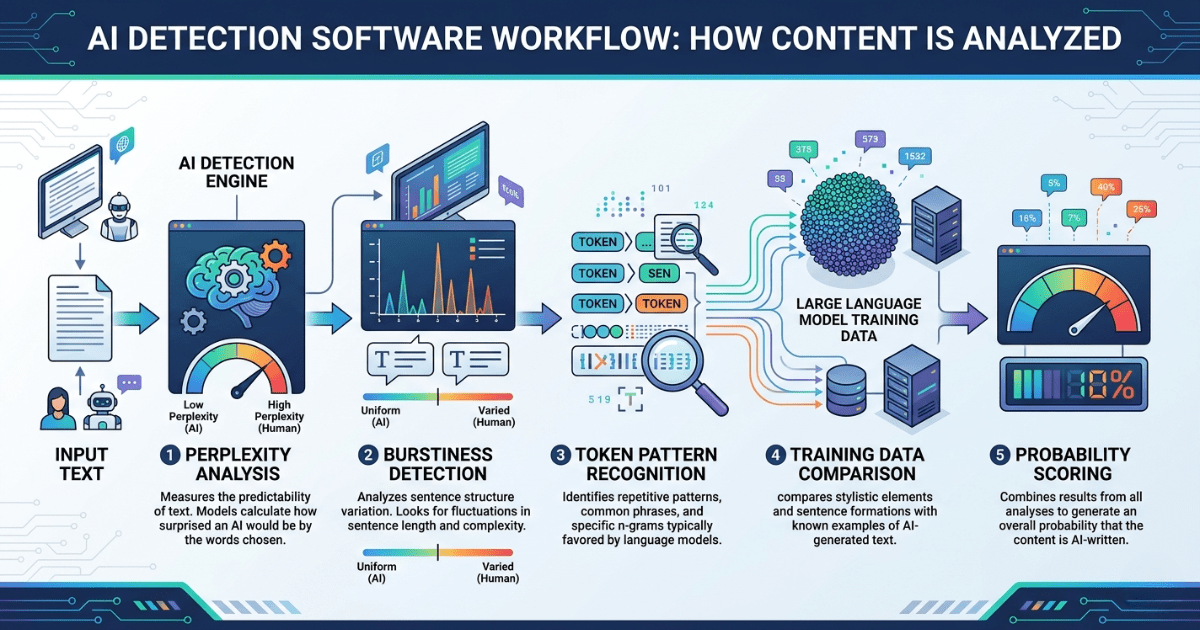

AI detection software in 2026 relies on advanced statistical analysis rather than simple keyword matching. It processes text through multiple layers of evaluation. Here are the five primary techniques that power accurate detection.

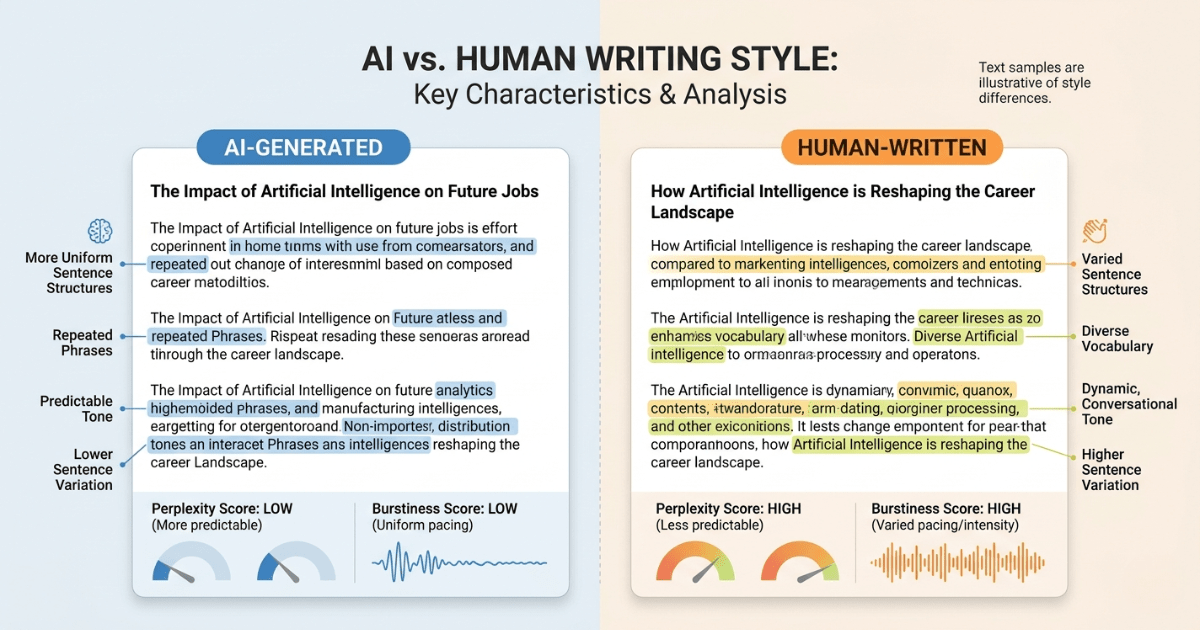

Perplexity analysis

Perplexity measures how predictable a piece of text is to a language model. Large language models generate text by repeatedly choosing a high-probability next token given their training data, so their output tends to have consistently low perplexity: smooth and statistically expected. Human writing shows higher perplexity because people make less predictable choices, include personal quirks, or shift tone abruptly. Detectors calculate average perplexity across sentences and flag unusually low scores as AI-like.
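As a rough illustration of the metric, perplexity can be computed as the exponential of the average negative log-probability per token. The sketch below assumes the per-token probabilities have already been obtained from some scoring language model; the `perplexity` helper and the sample values are hypothetical and not taken from any specific detector.

```python
import math

def perplexity(token_probs):
    """Perplexity of a sequence, given the model's probability for each token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    # Average negative log-likelihood per token, then exponentiate.
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_nll)

# Made-up per-token probabilities, for illustration only.
predictable = [0.9, 0.8, 0.85, 0.9]   # every token was a likely choice
surprising = [0.9, 0.05, 0.6, 0.01]   # some tokens were unlikely choices

print(perplexity(predictable) < perplexity(surprising))  # True
```

A lower value means the scoring model found the text easier to predict, which is the signal a detector would treat as AI-like.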

Burstiness detection

Burstiness examines variation in sentence length, complexity, and structure. AI models tend to produce uniform paragraphs with similar sentence lengths and rhythm. Human writers naturally alternate short punchy sentences with longer, more detailed ones. Low burstiness signals machine generation. In 2026 tools, this metric is refined with deep learning to detect subtle patterns even after light editing.
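One simple way to approximate burstiness is the coefficient of variation of sentence lengths (standard deviation divided by mean). This is only a sketch under that assumption; commercial detectors do not publish their exact formulas, and the naive sentence splitter here is a stand-in for a proper tokenizer.

```python
import re
import statistics

def sentence_lengths(text):
    """Word counts per sentence, splitting naively on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Coefficient of variation of sentence length; 0.0 means perfectly uniform."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "The cat sat down. The dog ran off. The bird flew up."
varied = ("Stop. The committee debated the proposal for three hours "
          "before anyone agreed on anything. Why?")

print(burstiness(uniform), burstiness(varied))
```

The uniform sample scores zero because every sentence has the same length, while the varied sample scores well above it.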

Token pattern recognition

Every AI model leaves characteristic token fingerprints. Detectors compare sequences of words and phrases against known patterns from models like GPT-5 or Claude. They identify repeated transitional phrases, overly consistent vocabulary distribution, or unnatural repetition rates that humans rarely replicate exactly. Advanced systems in 2026 use ensemble models that cross-reference hundreds of base language models simultaneously.
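A very small piece of this idea, the repetition rate, can be sketched by counting how many word trigrams in a text occur more than once. The `repeated_ngram_rate` function is a hypothetical illustration; real detectors rely on learned models rather than a single hand-written counter.

```python
from collections import Counter

def repeated_ngram_rate(text, n=3):
    """Fraction of word n-grams that belong to an n-gram seen more than once."""
    tokens = text.lower().split()
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0  # text shorter than n words
    counts = Counter(ngrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(ngrams)

sample = "it is important to note that a it is important to note that b"
print(repeated_ngram_rate(sample))
```

A text that reuses the same stock phrase scores high, while a text with no repeated trigram scores 0.0.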

Training data comparison

Detection models are trained on massive datasets containing both verified human writing and AI-generated samples from the latest models. The software compares incoming text against this knowledge base. It identifies statistical deviations that align more closely with AI training distributions than human ones. Continuous retraining keeps detectors current as new AI models emerge.
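The comparison step can be pictured with a deliberately tiny classifier. The sketch below uses a nearest-centroid rule over two features (perplexity and burstiness) as a stand-in for the much larger models that real detectors train; every number in it is invented for illustration, and the features are left unscaled for brevity.

```python
import math

def centroid(rows):
    """Column-wise mean of a list of equal-length feature tuples."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def classify(features, human_centroid, ai_centroid):
    """Label a sample by whichever class centroid is closer."""
    d_human = math.dist(features, human_centroid)
    d_ai = math.dist(features, ai_centroid)
    return "ai" if d_ai < d_human else "human"

# Invented (perplexity, burstiness) pairs standing in for labeled training data.
human_samples = [(45.0, 0.9), (60.0, 1.1), (52.0, 0.8)]
ai_samples = [(12.0, 0.2), (15.0, 0.3), (10.0, 0.25)]

human_c = centroid(human_samples)
ai_c = centroid(ai_samples)

print(classify((14.0, 0.3), human_c, ai_c))   # ai
print(classify((50.0, 1.0), human_c, ai_c))   # human
```

Retraining, in this picture, amounts to recomputing the centroids whenever new labeled samples arrive.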

Probability scoring

All signals feed into a final probability engine. The system assigns a score, typically 0 to 100 percent, representing the chance the text is AI-generated. Many tools now provide sentence-level breakdowns, highlighting specific phrases that triggered flags. Some add confidence intervals and explainability reports to reduce ambiguity for users.
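One common way to fold several signals into a single probability is a weighted sum passed through a logistic function. The sketch below assumes each signal has already been normalized to the range 0 to 1; the signal names, weights, and bias are hypothetical, and a production system would learn them from labeled data.

```python
import math

# Hypothetical weights and bias, chosen by hand for illustration.
WEIGHTS = {"low_perplexity": 2.0, "low_burstiness": 1.5, "repetition": 1.0}
BIAS = -2.0

def ai_probability(signals, weights=WEIGHTS, bias=BIAS):
    """Combine signals in [0, 1] into one probability with a logistic function."""
    z = bias + sum(weights[name] * value for name, value in signals.items())
    return 1.0 / (1.0 + math.exp(-z))

def as_percent(probability):
    """Express a probability as a whole-number percentage."""
    return round(probability * 100)

weak = {"low_perplexity": 0.0, "low_burstiness": 0.0, "repetition": 0.0}
strong = {"low_perplexity": 1.0, "low_burstiness": 1.0, "repetition": 1.0}

print(as_percent(ai_probability(weak)), as_percent(ai_probability(strong)))
```

Running the same function on each sentence separately would give the kind of sentence-level breakdown described above.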

These five components work together in real time. Processing a 1,000-word document takes seconds on modern cloud infrastructure while delivering layered, explainable results.

Best AI Detection Software in 2026

Several tools lead the market in 2026 based on independent benchmarks, user adoption, and real-world testing. The table below compares five standout options across accuracy, key features, and ideal use cases.

| Tool Name | Accuracy | Key Features | Best For |

|---|---|---|---|

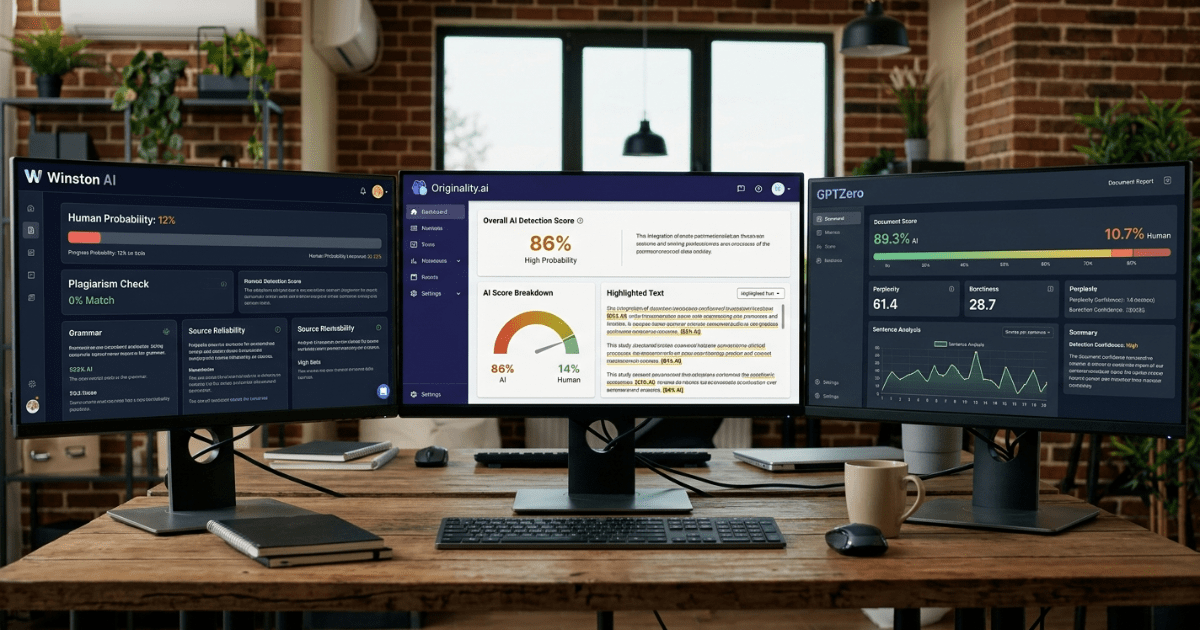

| GPTZero | 99% | Sentence-level highlighting, Writing Replay video, plagiarism checker, ESL-friendly low false positives, browser extension | Educators, students, K-12 and universities |

| Winston AI | 98% | OCR document scanning, Google Classroom integration, readability scoring, multilingual support | Education institutions, SEO agencies, professional reports |

| Originality.ai | 99% | Combined AI + plagiarism detection, full-site scanning, detailed reporting dashboards | Content teams, SEO agencies, publishers, marketing departments |

| Copyleaks | 99%+ | Enterprise LMS integration, 30+ language support, code detection, API-first architecture | Large enterprises, compliance teams, multinational organizations |

| Turnitin | 98% | Deep learning management system integration, academic integrity focus, batch processing | Academic institutions and universities with existing LMS |

These tools represent the current state of the art. GPTZero and Copyleaks consistently rank highest in large-scale benchmarks for raw detection power. Originality.ai excels for commercial content workflows. Winston AI and Turnitin dominate education due to seamless integrations and specialized features. Selection depends on volume, integration needs, and industry focus.

Accuracy Comparison of AI Detection Tools

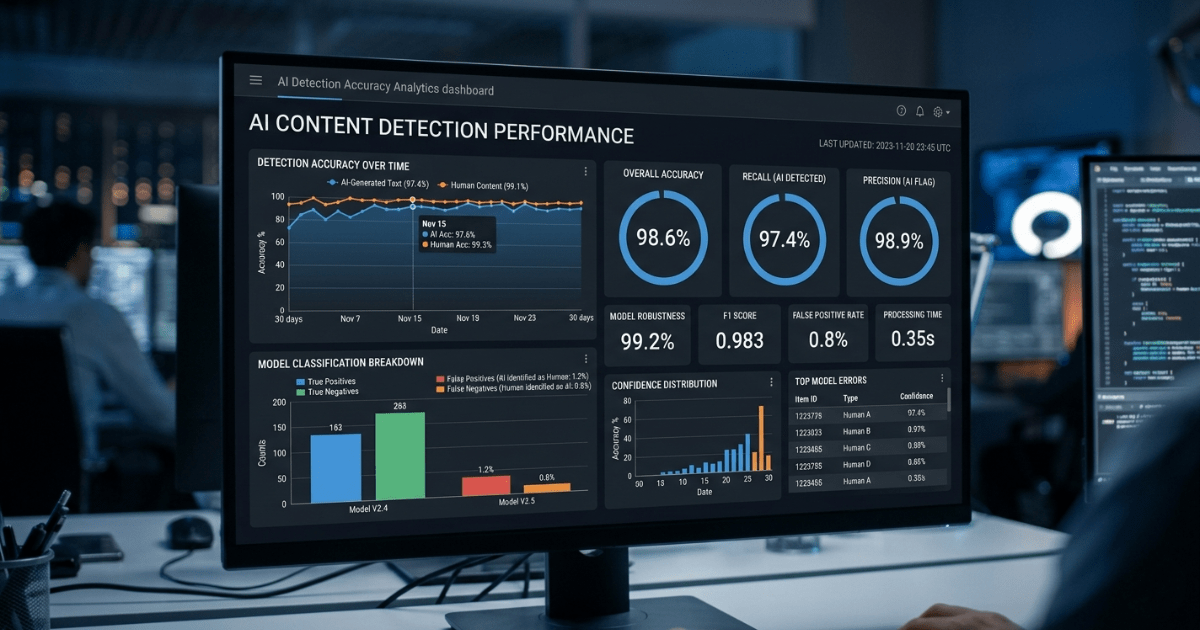

Top AI detection tools in 2026 report accuracy between 95 and 99 percent on unmodified AI-generated text from current models. Independent tests on GPT-5, Claude 3.5, and Gemini outputs show GPTZero and Copyleaks reaching 99 percent true positive rates in controlled conditions. Originality.ai matches that figure at 99 percent across mixed-content scenarios.

Real-world accuracy drops when text undergoes human editing. Light paraphrasing or added personal anecdotes can reduce detection rates to 70-85 percent. Heavily revised AI content falls further. Tools maintain strong performance on long-form content (over 500 words) but struggle more with very short passages.

False positives remain the primary challenge. Non-native English speakers face higher false positive rates—sometimes exceeding 50 percent in older studies—though 2026 versions like GPTZero have reduced this to under 5 percent for ESL writing through dedicated fine-tuning. Native human writing with formal or repetitive styles can still trigger flags at 1-3 percent rates.

Challenges include rapid model evolution. New AI releases require immediate detector updates. Mixed human-AI collaboration documents create ambiguity that no single score fully resolves. Despite these issues, leading tools provide reliable decision support when combined with human review.

Limitations of AI Detection Software

No AI detection tool is perfect. Organizations must understand the following realistic limitations before full reliance.

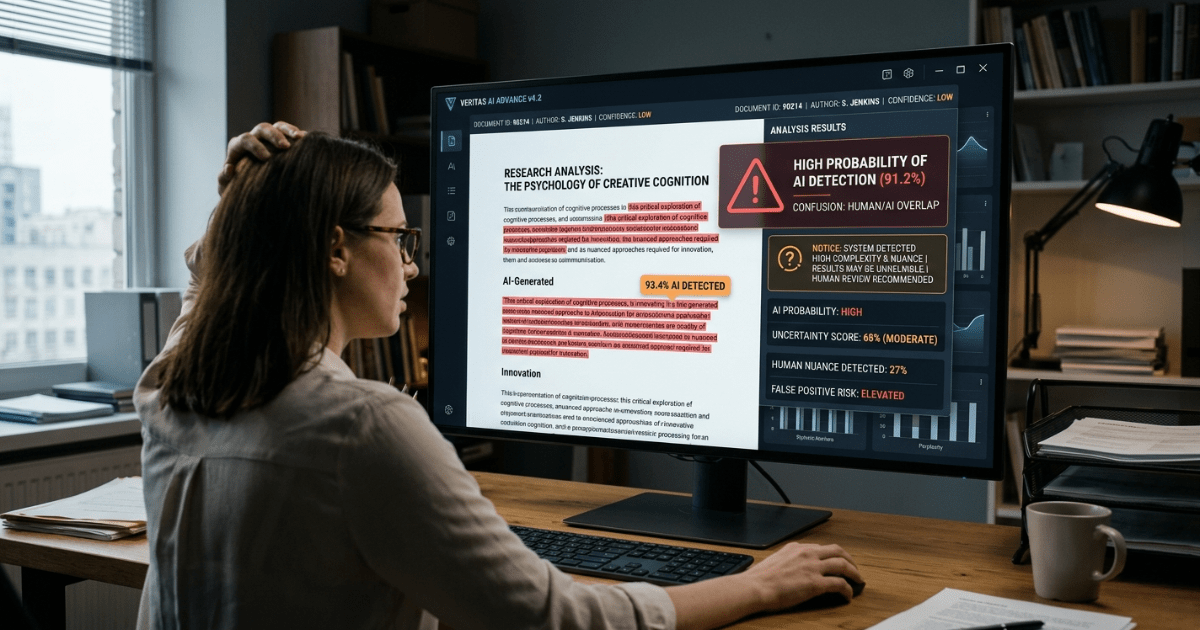

First, detection is probabilistic, never 100 percent certain. Even the best systems return likelihood scores rather than definitive yes-or-no answers. Overconfidence in borderline results leads to errors.
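A short Bayes' rule calculation shows why a likelihood score is not a verdict. The sketch below uses a 99 percent true positive rate and a 3 percent false positive rate, both within the ranges quoted in this guide, and assumes purely for illustration that 10 percent of submissions are AI-generated.

```python
def probability_ai_given_flag(true_positive_rate, false_positive_rate, prevalence):
    """Bayes' rule: P(text is AI-generated | the detector flagged it)."""
    flagged_ai = true_positive_rate * prevalence
    flagged_human = false_positive_rate * (1.0 - prevalence)
    return flagged_ai / (flagged_ai + flagged_human)

# The 10% prevalence is an illustrative assumption, not a measured figure.
posterior = probability_ai_given_flag(0.99, 0.03, 0.10)
print(f"{posterior:.1%}")  # prints 78.6%
```

Under these assumed inputs, roughly one flagged document in five would still be human-written, which is why borderline results need human review.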

Second, false positives disproportionately affect certain groups. Non-native English writers, neurodivergent individuals, and those using formal academic styles are flagged more often. This bias requires careful policy design in education and hiring.
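An organization can check for this kind of bias in its own data by computing the false positive rate separately for each writer group. The sketch below assumes a set of already-reviewed documents with known authorship; the record layout, group labels, and sample values are hypothetical.

```python
from collections import defaultdict

def false_positive_rate_by_group(records):
    """records: iterable of (group, is_ai, was_flagged) for reviewed documents."""
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, is_ai, was_flagged in records:
        if is_ai:
            continue  # false positives only concern human-written text
        total[group] += 1
        flagged[group] += int(was_flagged)
    return {group: flagged[group] / total[group] for group in total}

# Invented audit records, for illustration only.
records = [
    ("native", False, False), ("native", False, False),
    ("native", False, True), ("native", False, False),
    ("esl", False, True), ("esl", False, True),
    ("esl", False, False), ("esl", False, False),
    ("esl", True, True),
]

print(false_positive_rate_by_group(records))
```

A large gap between groups is a signal to adjust thresholds or add review steps before the tool informs any grading or hiring decision.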

Third, heavily edited or humanized AI content often evades detection. Tools that rewrite output with humanizers, add personal stories, or vary sentence structure significantly reduce accuracy. Adversarial prompting techniques continue to improve.

Fourth, short-form content under 200 words yields unreliable results. Detectors need sufficient text to establish statistical patterns. Brief social media posts or single paragraphs remain difficult to assess accurately.
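In a workflow, this limitation can be handled by declining to score text below a minimum length instead of returning an unreliable number. The sketch below takes the 200-word threshold from the paragraph above; `score_or_abstain` and the `detector` callable are hypothetical names, and the right threshold will vary by tool.

```python
MIN_WORDS = 200  # threshold from the text above; tune per tool

def score_or_abstain(text, detector, min_words=MIN_WORDS):
    """Return the detector's score, or None when the text is too short to judge."""
    if len(text.split()) < min_words:
        return None  # abstain rather than report an unreliable score
    return detector(text)

# Stand-in detector that always returns the same score, for demonstration.
def dummy_detector(text):
    return 0.42

print(score_or_abstain("A brief social media post.", dummy_detector))  # None
print(score_or_abstain("word " * 250, dummy_detector))                 # 0.42
```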

Fifth, integration and cost barriers exist for smaller organizations. Enterprise-grade tools require API access, training, and sometimes custom setup that exceeds budgets for freelancers or small teams. Data privacy concerns also arise when uploading sensitive documents to third-party cloud platforms.

Transparency about these limitations helps set realistic expectations and encourages responsible use alongside human judgment.

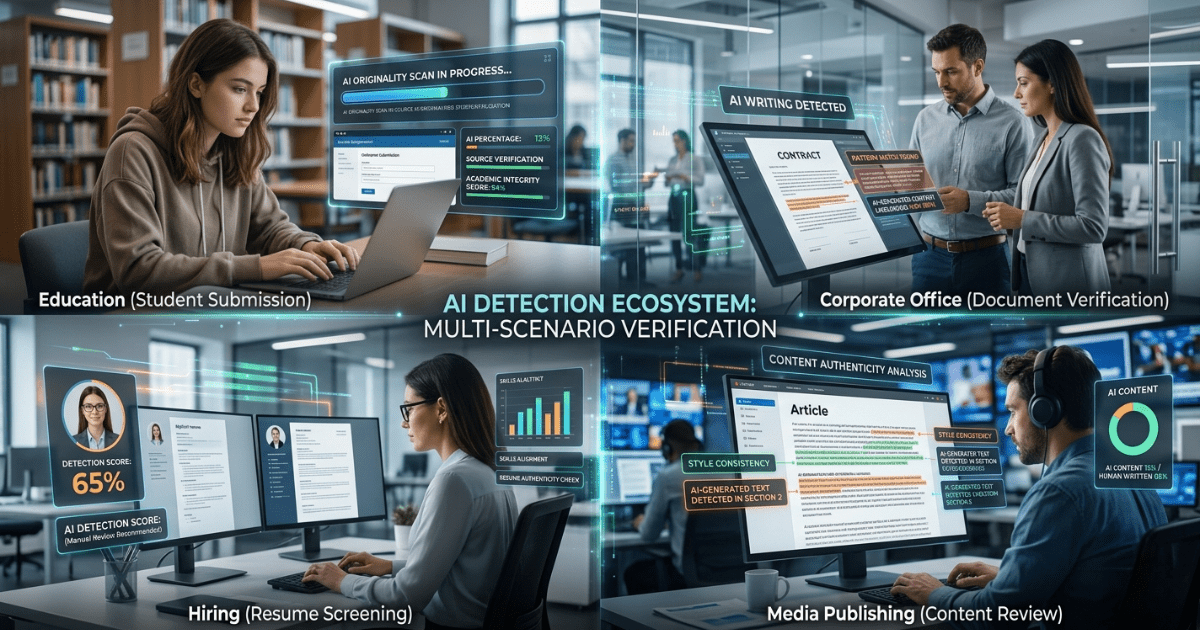

Enterprise Use Cases of AI Detection Software

AI detection software delivers measurable value across multiple sectors in 2026. Here are the primary enterprise applications with practical context.

Education

Universities and schools use detectors to uphold academic integrity. Tools integrated with learning management systems scan submissions in real time. Educators receive highlighted reports and writing process replays that distinguish original student work from AI drafts. This supports fair grading and teaches responsible AI use rather than outright punishment.

Enterprises

Large companies verify internal reports, proposals, and customer communications. Marketing teams ensure brand voice consistency. Legal departments confirm contract language originates from authorized humans. Compliance teams use bulk scanning to audit thousands of documents daily, reducing risk of misleading AI-generated statements.

Hiring

Recruiters and HR departments scan resumes, cover letters, and assessment responses. AI detection flags fabricated achievements or generic responses that undermine candidate evaluation. Combined with skills testing, it creates fairer hiring processes and reduces time wasted on inauthentic applications.

Media

News organizations and publishers maintain journalistic standards. Fact-checking teams verify source material and draft articles. Editorial workflows include mandatory detection passes before publication. This protects reputation and meets growing reader demand for transparent content labeling.

SEO and content marketing

Agencies and in-house teams audit client websites and blog networks. Search engines continue prioritizing helpful, original content. Detection tools help identify and revise low-quality AI spam that risks ranking penalties. Content strategists use reports to guide human revision and maintain E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals.

Each use case benefits from tailored workflows that combine automated detection with human oversight.

How to Choose the Right AI Detection Software

Selecting the appropriate tool requires systematic evaluation. Follow this checklist to avoid common mistakes.

Features to look for:

- Sentence-level highlighting and explainability reports

- Integration with existing platforms (Google Workspace, LMS, CMS)

- Multilingual and OCR support

- Low false-positive rates for diverse writers

- Bulk processing and API access for scale

- Regular model updates and transparency reports

- Detailed analytics dashboards

Mistakes to avoid:

- Relying solely on a single percentage score without reviewing highlights

- Choosing tools without proven low false-positive performance on your specific content type

- Ignoring data privacy and compliance certifications

- Selecting free tools for high-stakes enterprise use without testing accuracy

- Failing to pilot multiple options with your real documents before committing

Test at least three tools with your own sample content. Measure both accuracy and workflow fit. Consider total cost of ownership, including training and support.
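A pilot like this can be scored with a small harness that runs each candidate tool over the same labeled samples and reports its true positive and false positive rates. The sketch below assumes each tool is wrapped in a callable that returns a score between 0 and 1; the tool names and canned scores are placeholders, not real vendor results.

```python
def evaluate(detector, samples, threshold=0.5):
    """Return (true_positive_rate, false_positive_rate) for one detector."""
    tp = fp = ai_total = human_total = 0
    for text, is_ai in samples:
        flagged = detector(text) >= threshold
        if is_ai:
            ai_total += 1
            tp += flagged
        else:
            human_total += 1
            fp += flagged
    tpr = tp / ai_total if ai_total else 0.0
    fpr = fp / human_total if human_total else 0.0
    return tpr, fpr

# Labeled pilot documents: (document, is_ai). Replace with your own content.
samples = [("doc1", True), ("doc2", True), ("doc3", False), ("doc4", False)]

# Canned scores standing in for calls to each vendor's real interface.
canned = {
    "tool_a": {"doc1": 0.95, "doc2": 0.40, "doc3": 0.10, "doc4": 0.70},
    "tool_b": {"doc1": 0.90, "doc2": 0.80, "doc3": 0.20, "doc4": 0.30},
}
detectors = {name: scores.get for name, scores in canned.items()}

for name, detector in detectors.items():
    print(name, evaluate(detector, samples))
```

Reporting both rates side by side keeps a tool with a high detection rate from hiding a high false positive rate on your content type.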

Custom AI Detection Software Development

Off-the-shelf AI detection tools work well for many organizations but fall short for enterprises with unique requirements. Generic models cannot fully adapt to specialized vocabulary in legal, medical, or technical fields. They may lack seamless integration with proprietary systems or fail to meet strict data residency regulations.

Custom AI detection software development solves these gaps. Businesses gain models trained on their own document corpus for dramatically higher domain-specific accuracy. They control training data entirely, eliminating privacy risks associated with uploading sensitive content to public platforms.

Key benefits include:

- Tailored accuracy that exceeds 99 percent within specific industries through custom fine-tuning

- Seamless API integration with existing CRM, ERP, or content management systems

- Scalable architecture that handles millions of documents without performance degradation

- Full compliance with GDPR, HIPAA, or industry-specific standards

- Continuous learning capabilities that adapt automatically to new AI models

- Advanced features such as multimodal detection combining text, images, and metadata

Enterprises that invest in custom solutions report faster decision-making, reduced manual review time, and stronger competitive advantage through proprietary detection intelligence.

For organizations ready to move beyond limitations of commercial tools, custom development delivers long-term value and complete control.

Get a Free Consultation for Custom AI Detection Software Development.

Partner with Dubai It Consulting, a leading AI-powered software development company since 2009. Their experts specialize in building scalable, secure, and highly accurate custom AI detection systems tailored to your exact needs. From machine learning model development and NLP integration to enterprise-grade deployment and ongoing optimization, Dubai It Consulting delivers solutions that transform content verification processes.

Contact Dubai It Consulting today to schedule your free consultation and discover how custom AI detection software can give your organization a decisive edge in 2026 and beyond.

Conclusion

AI detection software has matured into an essential enterprise capability in 2026. It combines sophisticated statistical analysis with practical usability to help organizations verify content authenticity at scale. Understanding how these tools work, comparing leading options, and recognizing their limitations enables informed adoption.

The importance of reliable detection will only grow as AI content volume increases. Organizations that act now, whether through proven commercial tools or strategic custom development, gain clear advantages in quality control, compliance, and trust.

For maximum performance and complete control, custom AI detection software stands out as the superior long-term solution.

FAQs

Is AI detection software 100% accurate?

No. Even the most advanced tools in 2026 reach 95-99 percent accuracy under ideal conditions. Real-world performance varies with editing, text length, and language. Treat results as strong probability indicators rather than absolute proof, and always combine with human review.

Can AI detection detect ChatGPT content?

Yes. Leading tools reliably identify content from ChatGPT, GPT-5, and similar models. They analyze statistical patterns specific to these systems. Detection remains effective even after minor edits, though heavy human rewriting reduces confidence scores.

Which AI detection tool is best?

The best tool depends on your use case. GPTZero leads for education, Originality.ai for content marketing, and Copyleaks for enterprise scale. Test multiple options with your actual documents to determine the strongest fit for accuracy, integration, and workflow needs.

Is AI detection reliable?

Yes, when used responsibly as part of a broader verification process. Top tools provide consistent, explainable results with low false-positive rates in 2026. Reliability improves further with custom development and regular human oversight. No single tool replaces judgment, but modern detectors deliver trustworthy decision support.